Claude Models Now Halt Harmful Conversations, Says Anthropic

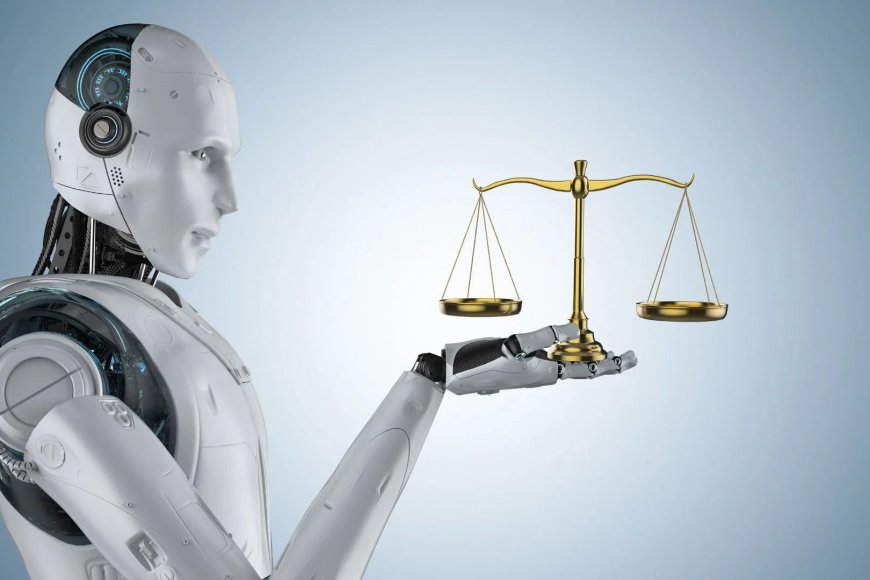

Anthropic, the AI research organization, has announced an upgrade to its latest artificial intelligence models: the ability to autonomously recognize and terminate abusive conversations. The change marks a notable shift in AI safety and user interaction. By equipping its models with self-protective measures, Anthropic aims to foster more respectful and constructive dialogue between users and AI.

At the heart of this innovation lies a sophisticated understanding of conversational dynamics. The newly enhanced AI models are designed to identify specific patterns of abusive language, harassment, or toxic behavior, allowing them to respond in a manner that prioritizes user safety and well-being. This capability is not merely about silencing users; rather, it reflects a broader commitment to creating a more humane interaction landscape in the digital world.

For years, AI developers have grappled with the challenge of ensuring that their systems do not become conduits for harmful speech. As AI technologies have become more integrated into daily life, the potential for misuse has escalated. From chatbots to virtual assistants, the need for a framework that can effectively manage abusive interactions has never been more pressing. Anthropic’s latest advancements represent a proactive approach to this issue, emphasizing ethical AI development and user protection.

The implementation of these self-protective features is particularly timely, given the rising concerns over online harassment and the proliferation of toxic behavior in digital communication. Studies have shown that abusive interactions can have detrimental effects on mental health, leading to increased anxiety and depression among victims. By empowering AI models to recognize and terminate such conversations, Anthropic seeks to mitigate these risks and promote healthier online environments.

Anthropic’s initiative is grounded in an ethical framework aimed at ensuring that AI technologies serve humanity positively. The organization operates on the principle that AI should be developed with a focus on safety, transparency, and respect for user dignity. By giving its AI models the ability to end conversations, Anthropic is taking a stand against the normalization of toxic behavior in digital spaces.

But how exactly do these AI models identify abusive language? Anthropic employs a combination of advanced natural language processing (NLP) techniques and machine learning algorithms to train its models on vast datasets that include various forms of dialogue. By analyzing linguistic patterns, tone, and contextual cues, the AI can discern when a conversation crosses the line into abusive territory. This nuanced understanding allows it to take appropriate action, whether that means ending the conversation, issuing a warning, or redirecting the dialogue toward a more constructive path.

Moreover, the ability to terminate abusive conversations is not a one-size-fits-all solution. Anthropic recognizes that different contexts may require varying degrees of intervention. For instance, in a customer service scenario, the AI might choose to escalate the issue to a human representative if it detects that a user is becoming increasingly aggressive. In contrast, a casual chat setting might prompt the AI to end the conversation entirely when faced with overtly abusive language. This flexibility enables the AI to tailor its responses to the specific dynamics at play, enhancing user experience while maintaining a firm stance against toxicity.
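The context-dependent behavior described above can be pictured as a small policy function. The sketch below is purely illustrative: the `Context` and `Action` names, the `abuse_score` input, and the threshold values are all hypothetical, and nothing here reflects how Anthropic actually implements the feature, which the announcement as described does not detail.

```python
from enum import Enum


class Context(Enum):
    """Hypothetical deployment settings mentioned in the article."""
    CUSTOMER_SERVICE = "customer_service"
    CASUAL_CHAT = "casual_chat"


class Action(Enum):
    """Hypothetical interventions, from mildest to most severe."""
    CONTINUE = "continue"
    REDIRECT = "redirect"
    WARN = "warn"
    ESCALATE_TO_HUMAN = "escalate_to_human"
    END_CONVERSATION = "end_conversation"


# Assumed thresholds on a 0.0-1.0 abuse score; the values are arbitrary.
REDIRECT_THRESHOLD = 0.3
WARN_THRESHOLD = 0.6
SEVERE_THRESHOLD = 0.8


def choose_action(context: Context, abuse_score: float) -> Action:
    """Pick an intervention from the context and an assumed abuse score."""
    if not 0.0 <= abuse_score <= 1.0:
        raise ValueError("abuse_score must be between 0.0 and 1.0")
    if abuse_score < REDIRECT_THRESHOLD:
        return Action.CONTINUE
    if abuse_score < WARN_THRESHOLD:
        return Action.REDIRECT
    if abuse_score < SEVERE_THRESHOLD:
        return Action.WARN
    # Severe abuse: the response depends on the deployment context.
    if context is Context.CUSTOMER_SERVICE:
        return Action.ESCALATE_TO_HUMAN
    return Action.END_CONVERSATION
```

In this toy version, a severe score in a customer service setting hands the exchange to a human, while the same score in a casual chat ends the conversation, mirroring the two scenarios in the paragraph above.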

The implications of this technology extend beyond mere conversation management. By fostering a more positive interaction environment, Anthropic is also paving the way for increased trust in AI systems. Many users harbor apprehensions about engaging with AI, often due to fears of encountering unfiltered negativity or hostility. By demonstrating a commitment to curbing abusive behavior, Anthropic aims to reassure users that their interactions with AI can be safe and constructive. This trust is crucial for the widespread adoption of AI technologies across various sectors, from education to mental health support.

Furthermore, Anthropic’s advancements could serve as a benchmark for other organizations in the AI space. As the industry continues to grapple with ethical dilemmas surrounding AI deployment, the proactive measures taken by Anthropic may inspire similar initiatives across the tech landscape. The call for responsible AI development is gaining traction, and as more companies recognize the importance of addressing abusive behavior, a collective standard for ethical AI interactions may emerge.

In practice, this shift could lead to a significant transformation in how users engage with AI. Imagine a scenario where a virtual assistant not only helps you schedule appointments but also promotes a respectful dialogue, gently steering conversations away from negativity. This vision is not far-fetched; with Anthropic’s innovations, it is quickly becoming a reality.

As we look to the future, the technology landscape will continue to evolve. The ability of AI models to end abusive conversations is just one step in a larger journey toward creating safer, more ethical digital experiences. Anthropic’s commitment to this cause is commendable, and as the organization continues to refine its technologies, the potential for positive change in user-AI interactions becomes increasingly tangible.

In conclusion, Anthropic’s latest advancements in AI safety reflect a crucial step forward in the responsible development of artificial intelligence. By empowering its models to identify and terminate abusive conversations, the organization is setting a new standard for ethical AI. The focus on creating a respectful dialogue not only enhances user experience but also builds trust in AI technologies. As these innovations gain traction, the hope is that they will inspire a broader movement toward more humane and constructive interactions in the ever-expanding digital landscape.
